Test Artifacts: Does Your Project Really Need All of Them?

Testing is an essential part of the software development process, as it helps ensure that a software application is reliable, functional, and meets the needs of its users.

To support testing, many projects create various testing artifacts, such as test cases, test plans, and test reports. However, it's important to consider whether a project really needs all of these testing artifacts, as they can take time and resources to create and maintain. In this post, we'll explore the different types of testing artifacts and consider when they might be necessary for a project.

What are Test Artifacts in Software Testing?

Test artifacts (also known as test deliverables) are the documents and other materials produced or used during the testing process. They provide evidence of the testing that has been performed, communicate the results of that testing to stakeholders, and help confirm that the system under test is of high quality and ready for deployment.

Testing Artifacts Description

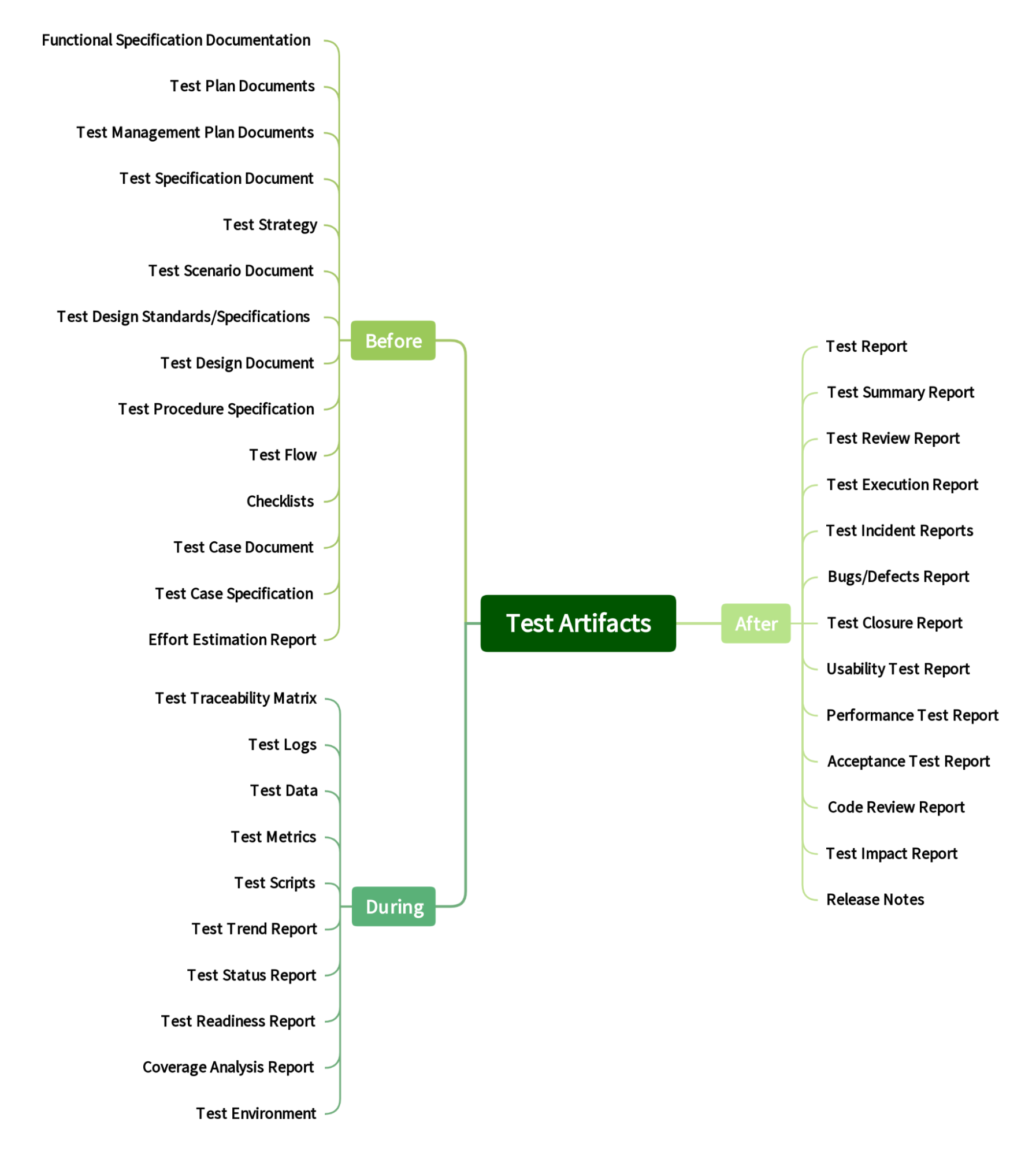

The figure above shows the deliverables produced as part of a testing process, before, during, and after the testing effort. They include documents, reports, and other materials used to record and communicate the results of testing activities. Common examples include test plans, test cases, test scripts, test reports, and defect reports. Each of these plays a role in ensuring the quality and reliability of a product or system, and they are typically created and reviewed by testers and quality assurance professionals.

Taxonomy of Test Artifacts

Below is a brief description of each test artifact identified in the survey, along with its purpose.

- Functional Specification Documentation: A document that provides a clear and detailed description of the system's intended behavior, which can be used to create test cases and validate that the system is functioning correctly.

- Test Plan Documents: Documents that outline the approach and strategies that will be used to test the software. The test plan typically includes details on the scope of testing, the types of tests that will be performed, the resources required, and the schedule for testing.

- Test Management Plan Documents: Documents that outline the approach, objectives, and resources required for testing activities within a project. They should be developed in conjunction with the overall project plan and should take into account the specific testing needs of the project, including the types of testing to be performed, the test environment, and the roles and responsibilities of the testing team.

- Test Specification Document: A document intended to provide a clear and comprehensive overview of the testing plan and to serve as a reference point for the testing team.

- Test Strategy: A document that outlines the approach that will be taken to test a product or system. It is typically a high-level document that provides an overview of the testing efforts and helps to ensure that testing is thorough and consistent.

- Test Scenario Document: A document that outlines the specific steps and conditions that need to be followed in order to test a particular feature or functionality of a software application.

- Test Design Standards/Specifications: This type of documentation provides a common framework for designing and conducting tests, and helps to ensure that tests are reliable, valid, and objective.

- Test Design Document: A document that provides a detailed plan for executing the tests and verifying that the software meets the required specifications.

- Test Procedure Specification: A document that outlines the steps and methods that will be followed to test a product or system. It is a detailed plan that defines the scope, objectives, resources, and approach for testing.

- Test Flow: A document that outlines the steps and procedures to be followed in order to test a specific feature or functionality of a product.

- Checklist: A document that lists items that need to be checked or reviewed. It provides a clear, structured way to record the tests that have been performed and their results.

- Test Case Document: A document that describes a set of tests conducted to verify that a software system or component meets specified requirements.

- Test Case Specification: A document that describes the details of a test case, such as its inputs, expected results, and execution conditions.

- Effort Estimation Report: A document that outlines the estimated time and resources required to complete the testing of a project or task.

- Installation and Configuration Guide: A document that provides step-by-step instructions for installing and configuring a software application or system.

- Traceability Matrix: A tool used to trace the requirements of a system or product to the test cases created to validate them. It is often used to confirm that every requirement has been tested and to identify gaps in test coverage.

- Test Log: A record of the testing activities that have been performed on a software system, including the test cases that were run, the results of those tests, and any defects or issues that were identified.

- Test Data: Any data or inputs used to execute test cases, such as input values, database records, or files consumed by test cases, test scenarios, or test scripts.

- Test Metrics: Data and statistics that provide insight into the effectiveness of the testing process, including the number of tests that were run, the number of defects found, and the time and resources required to complete the tests.

- Test Script: A step-by-step set of instructions for executing a test case, usually written in a programming language or using a test automation tool.

- Test Trend Report: A document used to monitor the progress of testing over time. It tracks the testing progress of the product across several releases.

- Test Status Report: A document that provides information about the progress, status, and results of testing activities for a project.

- Test Readiness Report: A document that assesses whether the prerequisites for testing, such as the test environment, test data, and test cases, are in place and the project is ready to start or continue testing.

- Coverage Analysis Report: A document that shows the extent to which a software application has been tested and identifies areas that may have been insufficiently tested.

- Test Environment: The hardware, software, and other resources required to execute tests, such as a test server or test devices.

- Test Report: A document that summarizes the results of a testing effort, including the number of test cases executed, the number of defects found, and any other relevant metrics or observations.

- Test Plan Report: A document that summarizes the results of the testing activities described in the test plan.

- Test Summary: A document that summarizes the results of a testing process. It typically includes information about the objectives of the testing, the scope of the testing, the approach taken, the results of the testing, and any issues or defects that were identified.

- Test Summary Report: A document that provides a high-level overview of the testing effort, including key metrics, findings, and recommendations.

- Test Review Report: A document that summarizes the results of a software testing effort. It typically includes an overview of the testing process, the test results, and any issues or defects identified, along with recommendations for addressing them and a summary of the overall quality of the software under test.

- Test Execution Report: A document that details the results of a testing effort. It typically includes information about the tests that were run, the test environment, any issues or defects discovered during testing, and the overall outcome of the testing effort.

- Test Incident Report: A document that provides a clear and accurate record of an incident observed during testing (an event that requires investigation, such as an unexpected result), including the circumstances leading up to it, the actions taken to address it, and any follow-up actions that may be required.

- Bug/Defect Report: A document that describes a defect found during testing, including details on the nature of the problem, the steps to reproduce it, and any relevant information on its impact or priority.

- Test Closure Report: A document that summarizes the final results of a testing effort, including any outstanding issues or open defects, and provides recommendations for next steps.

- Usability Test Report: A document that summarizes the results of a usability test and provides recommendations for improving the usability of a product.

- Performance Test Report: A document that summarizes the results of performance testing conducted on a software application or system.

- Acceptance Test Report: A document that summarizes the results of acceptance testing, which is performed to determine whether a system satisfies the acceptance criteria defined by the customer or end user.

- Code Review Report: A document that summarizes the results of a code review. It is typically used as a deliverable to provide feedback on the quality and correctness of the code being reviewed, and it also includes recommendations for improvement, such as best practices to follow or specific changes to make to the code.

- Test Impact Report: A document that outlines the impact that a change in the software or system under test will have on the testing process.

- Release Notes: A summary of changes and updates made to a software application or product.
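Several of the artifacts above, notably the traceability matrix, the coverage analysis report, and test metrics, are built from structured data rather than free-form prose. As a rough illustration, the Python sketch below builds a minimal traceability matrix from a list of executed test cases, flags requirements that no test traces to, and computes a requirement coverage percentage and a pass rate. It assumes each test result records which requirement IDs it validates; the names `CaseResult`, `build_traceability_matrix`, `coverage_gaps`, and `summarize`, as well as the `REQ-`/`TC-` identifiers, are hypothetical and not taken from any particular test management tool. "Coverage" here means requirement coverage, not code coverage.

```python
from dataclasses import dataclass


# Hypothetical record of one executed test case. The IDs are illustrative;
# a real project would load them from its test management tool.
@dataclass(frozen=True)
class CaseResult:
    test_id: str
    requirement_ids: tuple[str, ...]  # requirements this test case validates
    passed: bool


def build_traceability_matrix(requirements, results):
    """Map each requirement ID to the list of test-case IDs that trace to it."""
    matrix = {req: [] for req in requirements}
    for result in results:
        for req in result.requirement_ids:
            if req in matrix:  # ignore links to unknown requirements
                matrix[req].append(result.test_id)
    return matrix


def coverage_gaps(matrix):
    """Return the requirements that no test case traces to, sorted by ID."""
    return sorted(req for req, tests in matrix.items() if not tests)


def summarize(requirements, results):
    """Compute a few simple metrics of the kind a test summary report might include."""
    matrix = build_traceability_matrix(requirements, results)
    covered = sum(1 for tests in matrix.values() if tests)
    executed = len(results)
    passed = sum(1 for r in results if r.passed)
    return {
        "requirements": len(requirements),
        "requirement_coverage_pct": round(100 * covered / len(requirements), 1) if requirements else 0.0,
        "tests_executed": executed,
        "pass_rate_pct": round(100 * passed / executed, 1) if executed else 0.0,
        "uncovered": coverage_gaps(matrix),
    }


if __name__ == "__main__":
    requirements = ["REQ-1", "REQ-2", "REQ-3"]
    results = [
        CaseResult("TC-1", ("REQ-1",), passed=True),
        CaseResult("TC-2", ("REQ-1", "REQ-2"), passed=False),
    ]
    print(summarize(requirements, results))
```

In this example, REQ-3 is reported as uncovered, which is exactly the kind of gap a traceability matrix is meant to expose. Keeping the matrix as data rather than a hand-maintained spreadsheet means the coverage figures can be regenerated whenever tests or requirements change.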

Note that some artifacts are redundant or duplicative. For example, if you are already producing a detailed test plan, a separate high-level test strategy document may be unnecessary. Also, some artifacts may not be relevant to the specific goals of the testing effort. For example, if the main focus of testing is to find and fix bugs in the software, artifacts such as a usability test report may not be needed. In general, the need for test deliverables depends on the goals and objectives of the testing process, as well as the needs and expectations of the stakeholders involved.

Reasons for Having and Not Having Test Artifacts

The need for test artifacts depends on the specific context and goals of the testing effort. Here are some factors that may influence the need for test artifacts:

- Regulations or industry standards: In some cases, regulations or industry standards require the production of specific test artifacts. For instance, the aerospace, nuclear, and healthcare industries impose strict requirements for testing and documentation, which may call for detailed test reports.

- Project requirements: The requirements of the project or product being tested may also dictate the need for specific test deliverables. For instance, if the project requires a high level of quality and reliability, more comprehensive test deliverables may be necessary to ensure that all aspects of the product have been thoroughly tested.

- Stakeholder needs: Different stakeholders, such as developers, project managers, and customers, may have different needs and expectations when it comes to test deliverables. For instance, developers may need detailed reports to help them understand and fix any issues that are identified during testing, while customers may be more interested in summary reports that highlight the overall quality and reliability of the product.

- Testing goals: The goals of the testing effort will also influence the need for test deliverables. For instance, if the goal is to identify and fix as many issues as possible before the product is released, more detailed and comprehensive test artifacts may be necessary. On the other hand, if the goal is simply to ensure that the product meets a minimum level of quality, fewer and less detailed test artifacts may be sufficient.

There are also several reasons why an organization may not need every test artifact for a particular project. Factors that may influence this decision include:

- The complexity of the project: If the project is relatively simple, with few features and a limited scope, it may not be necessary to produce all of the test artifacts that would be required for a more complex project.

- The resources available: If the organization has limited resources, it may not be practical to produce all of the test deliverables that would be required for a more comprehensive testing effort.

- The level of risk: If the project carries a low level of risk, a lighter set of test artifacts may be sufficient. Conversely, a high-risk project may require more test artifacts in order to test the system thoroughly and ensure that it meets all of the required specifications.

In general, it is important to consider the specific goals and requirements of a testing effort carefully when deciding which test deliverables are needed. Focusing on the deliverables that matter most for those goals helps keep the testing effort both effective and efficient.

In conclusion, it is not always necessary to have all test artifacts in order to ensure the quality of a product. While it is important to have a thorough testing process in place, it is also important to prioritize and focus on the most important and relevant artifacts. Ultimately, the goal should be to identify and fix any defects or issues as efficiently as possible, without unnecessarily increasing the scope or complexity of the testing process.
