Google Antigravity: What It Is & Why Every Developer Needs It

Google has released what appears to be a significant step forward in AI-assisted development—an autonomous coding assistant designed to handle complete development workflows.

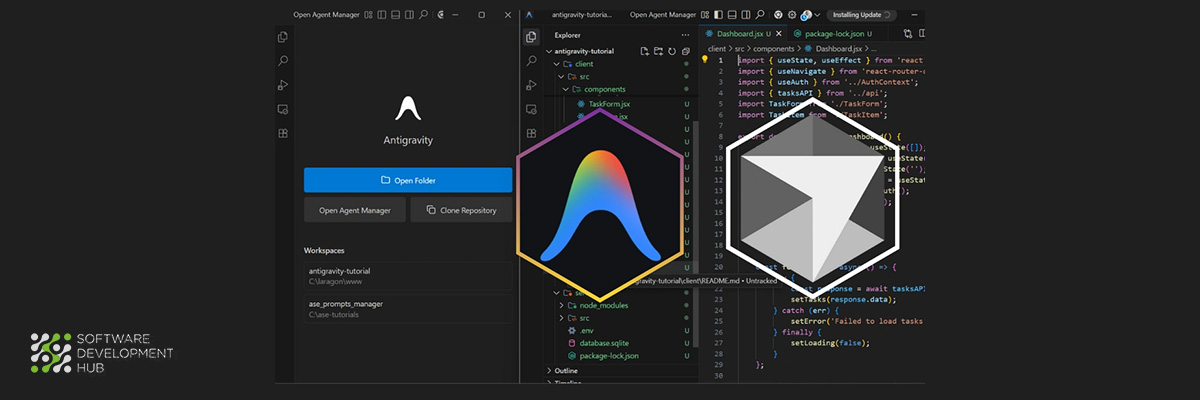

The distinction between Antigravity and conventional coding assistants becomes clear when we examine their approaches. Where existing tools primarily offer autocomplete suggestions and code snippets, Google Antigravity operates as an 'agent-first' platform that positions AI as an autonomous participant in the development process. Released alongside Gemini 3 in November 2025, this AI-powered integrated development environment allows developers to assign complex coding tasks to autonomous agents powered by Google's Gemini 3 Pro model.

This article covers what you need to know about Google Antigravity, from its core functionality to practical applications that may influence your development workflow in 2025 and beyond.

What is Google Antigravity?

"Antigravity represents a transformative evolution in IDE technology, steering development environments toward an agent-centric future. With its advanced browser control capabilities, asynchronous interaction patterns, and agent-first architecture, the platform empowers AI agents to independently conceptualize and execute complex software development tasks from start to finish." — Google Antigravity Team, Official Google Product Team, Google

Google Antigravity represents a departure from conventional development environments—a platform built specifically for an agent-first future where AI assumes roles beyond simple coding assistance. Launched alongside Gemini 3 in November 2025, this tool reflects Google's perspective on how developers will collaborate with increasingly capable AI systems.

A new kind of AI-powered IDE

How does an agentic development platform differ from traditional IDEs? Google Antigravity enables developers to operate at a higher, task-oriented level rather than focusing on individual lines of code. The platform inverts the conventional relationship—instead of embedding AI capabilities within a traditional IDE, Antigravity embeds your development surfaces (editor, terminal, browser) within the agent's workflow.

The architecture provides two distinct interaction modes:

- Editor View: A state-of-the-art, AI-powered IDE experience with familiar tab completions and inline commands for synchronous workflows

- Manager Surface: A dedicated interface for spawning, orchestrating, and observing multiple agents working asynchronously across different workspaces—essentially "mission control" for your AI workforce

This dual approach allows seamless transitions between hands-on coding and delegating entire workflows to autonomous agents. The platform enables these agents to plan multi-step tasks, execute them across multiple environments simultaneously, and verify their own work without constant human oversight.

How it differs from traditional coding tools

Traditional AI coding assistants like GitHub Copilot primarily function as sophisticated autocomplete engines—predicting your next line of code, suggesting function implementations, and answering questions about your codebase. They operate reactively, waiting for your direction and responding to your prompts.

Antigravity takes a different approach by giving agents direct access to the editor, terminal, and browser, allowing them to work autonomously across these surfaces. This architectural shift enables what Google calls "agent-first development," where AI can handle complex, end-to-end software tasks independently.

The platform introduces an innovative documentation system through what Google terms "Artifacts"—task lists, plans, screenshots, and browser recordings that verify both completed work and future steps. These artifacts create a natural audit trail and make it easier for users to verify the agent's actions compared to reading through logs of model actions and tool calls.

The role of Gemini 3 and other models

Antigravity draws its capabilities from Google's Gemini 3 Pro model, which provides the advanced reasoning, tool use, and agentic coding that drive the platform. The system also integrates tightly with Google's Gemini 2.5 Computer Use model for browser control and the Nano Banana (Gemini 2.5 Image) model for image editing.

The platform also offers model flexibility by supporting:

- Anthropic's Claude Sonnet 4.5

- OpenAI's GPT-OSS

This model optionality provides developers with choices while maintaining generous rate limits for Gemini 3 Pro usage. According to Google, these rate limits refresh every five hours, and only "a very small fraction of power users" will ever reach them.

Google Antigravity is available in public preview at no charge for individuals, with compatibility across Windows, macOS, and Linux. The platform positions itself as the foundation for software development in what Google envisions as the emerging era of autonomous coding agents.

How to Set Up Google Antigravity

Setting up Google Antigravity requires minimal preparation time. Understanding the installation options and configuration choices, however, will help you maximize this coding tool's potential from the first use.

System requirements and supported platforms

Google Antigravity currently supports three major operating systems with specific version requirements:

- macOS: macOS 12 (Monterey) or newer, with Apple Silicon processors only (M1/M2/M3/M4). Intel processors are not supported.

- Windows: Windows 10 or later (64-bit only).

- Linux: Systems with glibc >= 2.28 and glibcxx >= 3.4.25, including Ubuntu 20+, Debian 10+, Fedora 36+, and RHEL 8+.
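
The minimums listed above can be checked before downloading anything. The following is a minimal sketch using only Python's standard `platform` module; the helper names (`parse_version`, `meets_requirements`, `check_this_machine`) are made up for illustration and are not part of Antigravity. It does not check the libstdc++ (glibcxx) version, and the Windows check is deliberately rough.

```python
import platform
import re


def parse_version(text: str) -> tuple[int, ...]:
    """Turn a dotted version string such as '2.31' into (2, 31)."""
    parts = []
    for piece in text.split("."):
        match = re.match(r"\d+", piece)
        if match is None:
            break
        parts.append(int(match.group()))
    return tuple(parts)


def meets_requirements(system: str, machine: str, os_version: str,
                       libc: tuple[str, str]) -> bool:
    """Apply the listed minimums to one machine description."""
    if system == "Darwin":
        # macOS 12 or newer on Apple Silicon (reported as 'arm64')
        return machine == "arm64" and parse_version(os_version) >= (12,)
    if system == "Windows":
        # Windows 10 or later, 64-bit only (rough check on the machine name)
        return machine.endswith("64") and parse_version(os_version) >= (10,)
    if system == "Linux":
        # glibc >= 2.28; the glibcxx requirement is not checked here
        name, version = libc
        return name == "glibc" and parse_version(version) >= (2, 28)
    return False


def check_this_machine() -> bool:
    """Describe the current machine with the platform module and check it."""
    system = platform.system()
    os_version = platform.mac_ver()[0] if system == "Darwin" else platform.release()
    return meets_requirements(system, platform.machine(), os_version,
                              platform.libc_ver())


if __name__ == "__main__":
    print("Meets listed requirements:", check_this_machine())
```

Keeping the rule in `meets_requirements` separate from the detection in `check_this_machine` makes it possible to test the rule against machines you do not have at hand.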

During the current public preview period, Google Antigravity is available at no charge for individual use, offering generous rate limits for Gemini 3 Pro usage.

Installation steps for Windows, Mac, and Linux

The installation follows a straightforward process across all platforms:

- Visit the official Google Antigravity download page (antigravity.google/download)

- Select the appropriate installer for your operating system

- Run the installer and follow the on-screen instructions

- Configure your initial preferences during first-time setup

Linux users can also install through a package manager: Ubuntu/Debian users can add the Google repository and install via apt, while Fedora/RHEL users can use DNF.

Choosing your development mode: agent-assisted vs. agent-driven

On first launch, the platform asks how much autonomy to grant the AI agent. Google Antigravity offers three primary development modes:

- Agent-driven development: The "autopilot" option where the AI writes code, creates files, and runs commands automatically based on your instructions.

- Agent-assisted development: The recommended balanced approach where you maintain control while the AI helps with safe automations.

- Review-driven development: The most cautious option where the AI asks permission before performing almost any action.

You will also configure two important policies:

- Terminal Execution Policy: Controls whether the agent can automatically run terminal commands

- Review Policy: Determines when the agent needs your explicit approval before proceeding
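
One way to reason about the three modes is as a rule that decides when the agent pauses for approval. The sketch below is purely illustrative: the `Mode` enum, `needs_approval`, and the `SAFE_COMMANDS` allowlist are hypothetical names, not Antigravity's actual settings or configuration format, and "ask before almost any action" is simplified to "always ask".

```python
from enum import Enum


class Mode(Enum):
    AGENT_DRIVEN = "agent-driven"
    AGENT_ASSISTED = "agent-assisted"
    REVIEW_DRIVEN = "review-driven"


# Hypothetical allowlist of terminal commands treated as "safe automations".
SAFE_COMMANDS = {"ls", "pwd", "git status"}


def is_safe_command(command: str) -> bool:
    """Return True if the command is on the illustrative allowlist."""
    return command.strip() in SAFE_COMMANDS


def needs_approval(mode: Mode, action_is_safe: bool) -> bool:
    """Return True when the agent should pause and ask before acting."""
    if mode is Mode.AGENT_DRIVEN:
        return False  # autopilot: act on instructions without asking
    if mode is Mode.AGENT_ASSISTED:
        return not action_is_safe  # only safe automations run unprompted
    return True  # review-driven: simplified here to "always ask"


if __name__ == "__main__":
    command = "git status"
    for mode in Mode:
        ask = needs_approval(mode, is_safe_command(command))
        print(f"{mode.value}: ask before running {command!r}? {ask}")
```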

Linking your Google account and selecting a model

The final configuration steps include:

- Sign in with your Google account (currently works best with personal Gmail accounts during preview)

- Select your preferred AI model - Gemini 3 Pro is the default option, though Claude Sonnet 4.5 and GPT-OSS are also available

- Optionally import VS Code settings or start fresh

Antigravity will initialize your workspace and download necessary components, typically taking 2-3 minutes on most systems. Once complete, you gain access to Google's agentic development platform across your editor, terminal, and browser environments.

Exploring the Interface: Agent Manager, Editor, and Browser

Google Antigravity presents a fundamentally different interface architecture designed around agent-driven development. Rather than embedding AI inside a conventional IDE, the platform embeds the development surfaces (editor, terminal, browser) within the agent's workflow.

Agent Manager: Mission control for your agents

The Agent Manager functions as a centralized control system for managing multiple AI agents simultaneously. This dashboard enables the orchestration of agents working asynchronously across different project environments, eliminating the linear limitations of conventional chat-based interfaces.

Key components within the Agent Manager include:

- Inbox - Centralizes conversation tracking with real-time task status and agent progress monitoring

- Start Conversation - Initiates new agent task assignments

- Workspaces - Provides project environment management capabilities

- Playground - Offers experimental space for testing before workspace deployment

This architecture addresses the synchronous limitation inherent in previous AI coding assistants. Developers can now deploy multiple agents to address different issues or features concurrently, effectively scaling development capacity.

Editor View: Familiar layout with AI enhancements

The Editor View preserves the familiar development environment structure with VS Code-compatible interface design. This approach ensures existing development workflows remain accessible during the transition to agent-based development.

The AI-enhanced capabilities include:

- Inline Commands - Direct code highlighting with agent instruction capabilities

- Agent Side Panel - Integrated communication with AI assistant without context switching

Browser integration and testing workflows

Browser integration represents one of Antigravity's distinctive capabilities. Agents can invoke specialized browser subagents equipped with interaction tools including clicking, scrolling, typing, and page analysis functionality.

These subagents capture pages through DOM analysis, generate screenshots, and record interaction videos—producing detailed artifacts that document the complete agent workflow. This enables comprehensive end-to-end testing where agents write code, launch localhost through terminal commands, and verify application functionality independently.
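
The "launch localhost, then verify" loop described above can be approximated by hand with Python's standard library. This is a rough sketch of the idea only, not how Antigravity's browser subagents work: it fetches the page over HTTP instead of driving a real browser, and `HelloHandler` and `launch_and_verify` are hypothetical names.

```python
import threading
import urllib.request
from http.server import BaseHTTPRequestHandler, HTTPServer


class HelloHandler(BaseHTTPRequestHandler):
    """Stand-in for the application under test."""

    def do_GET(self):
        body = b"<h1>Feature works</h1>"
        self.send_response(200)
        self.send_header("Content-Type", "text/html")
        self.send_header("Content-Length", str(len(body)))
        self.end_headers()
        self.wfile.write(body)

    def log_message(self, format, *args):
        pass  # keep the console quiet


def launch_and_verify(expected: str) -> bool:
    """Start a local server, request the page, and check its content."""
    server = HTTPServer(("127.0.0.1", 0), HelloHandler)  # port 0: any free port
    thread = threading.Thread(target=server.serve_forever, daemon=True)
    thread.start()
    try:
        port = server.server_address[1]
        # An empty ProxyHandler bypasses any system proxy for the local request.
        opener = urllib.request.build_opener(urllib.request.ProxyHandler({}))
        with opener.open(f"http://127.0.0.1:{port}/", timeout=5) as response:
            page = response.read().decode("utf-8")
            return response.status == 200 and expected in page
    finally:
        server.shutdown()
        server.server_close()


if __name__ == "__main__":
    print("Verified:", launch_and_verify("Feature works"))
```

A real browser-based check would additionally exercise clicks, scrolling, and rendering, which a plain HTTP request cannot cover.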

Switching between views and managing workspaces

Google implemented separate windows for the Editor and Agent Manager to optimize each interface for its specific function. You can switch between views with the interface buttons or the keyboard shortcut Cmd+E.

This separation supports the distinction between direct coding activities (Editor) and high-level task orchestration (Agent Manager). Both interfaces serve complementary roles in what Google positions as asynchronous development methodology.

Key Features That Make Antigravity Stand Out

Google Antigravity introduces several distinctive capabilities that differentiate it from conventional AI coding assistants. The platform's feature set reflects a significant departure from traditional autocomplete-based tools toward a more autonomous development environment.

Multi-agent collaboration and parallel tasking

The platform addresses a fundamental limitation of existing AI assistants: sequential task processing. Antigravity allows you to spawn multiple agents simultaneously across different workspaces. This architecture enables developers to assign documentation research to one agent while another handles code refactoring—essentially parallel processing for development tasks. The mission control dashboard provides visibility into these concurrent work streams, displaying agent status and any pending approval requests.
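
The difference between sequential and parallel tasking can be illustrated with `asyncio`. This is a toy model under stated assumptions: `run_agent` and `mission_control` are hypothetical names, and `asyncio.sleep` stands in for real agent work. It does not call any Antigravity API.

```python
import asyncio


async def run_agent(name: str, task: str, seconds: float) -> str:
    """Pretend to work on a task; the sleep stands in for real work."""
    await asyncio.sleep(seconds)
    return f"{name} finished: {task}"


async def mission_control() -> list[str]:
    """Start two agents at once and wait for both to report back."""
    results = await asyncio.gather(
        run_agent("agent-1", "research documentation", 0.02),
        run_agent("agent-2", "refactor code", 0.01),
    )
    # gather returns results in the order the tasks were passed in,
    # regardless of which one finished first.
    return list(results)


if __name__ == "__main__":
    for line in asyncio.run(mission_control()):
        print(line)
```

Both tasks run concurrently, so the total wait is roughly the duration of the slower task instead of the sum of the two.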

Artifacts: Task plans, diffs, and walkthroughs

Rather than producing simple text outputs, Antigravity generates structured deliverables called Artifacts that document the development process:

- Task lists and implementation plans that outline the approach before code execution

- Code diffs that specify exactly which lines will be modified

- Screenshots capturing UI states before and after changes

- Browser recordings that document dynamic interactions

These structured outputs provide better visibility into agent actions compared to raw tool calls or execution logs.
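
To make the "code diff" artifact concrete, here is a small sketch that produces a unified diff with Python's standard `difflib` module. It shows the kind of information such an artifact conveys. The `make_diff` helper and the `config.py` file name are illustrative, and Antigravity's own diff format may differ.

```python
import difflib


def make_diff(before: str, after: str, filename: str) -> str:
    """Return a unified diff describing how `before` becomes `after`."""
    lines = difflib.unified_diff(
        before.splitlines(keepends=True),
        after.splitlines(keepends=True),
        fromfile=f"a/{filename}",
        tofile=f"b/{filename}",
    )
    return "".join(lines)


if __name__ == "__main__":
    old = "timeout = 10\nretries = 2\n"
    new = "timeout = 10\nretries = 3\n"
    print(make_diff(old, new, "config.py"))
```

Lines prefixed with `-` are removed and lines prefixed with `+` are added, so a reviewer can see exactly which lines change before approving.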

Fast vs. Planning mode

The platform offers two operational approaches based on task complexity:

- Fast mode executes tasks immediately—suitable for straightforward changes such as variable renaming or bash command execution when rapid iteration is required

- Planning mode generates detailed implementation plans before execution—appropriate for complex research tasks, architectural changes, or collaborative work requiring thorough preparation

Agent memory and contextual awareness

Antigravity implements learning as a core system capability. Agents both retrieve information from their knowledge base and contribute new insights back to it. This bidirectional knowledge flow allows agents to retain code snippets, architectural patterns, and successful problem-solving approaches from previous tasks—creating a continuously improving AI partner.
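
The bidirectional flow described above (retrieve from the knowledge base, then contribute back to it) can be pictured with a very small data structure. This is a conceptual sketch only. `KnowledgeBase` and its methods are hypothetical, and the source does not describe how Antigravity actually stores or indexes agent memory.

```python
from dataclasses import dataclass, field


@dataclass
class KnowledgeBase:
    """Toy store mapping a topic to the insights recorded for it."""

    entries: dict[str, list[str]] = field(default_factory=dict)

    def contribute(self, topic: str, insight: str) -> None:
        """Record something learned while working on a task."""
        self.entries.setdefault(topic.lower(), []).append(insight)

    def retrieve(self, topic: str) -> list[str]:
        """Return a copy of everything known about a topic."""
        return list(self.entries.get(topic.lower(), []))


if __name__ == "__main__":
    kb = KnowledgeBase()
    kb.contribute("Testing", "Mock the payment client in unit tests")
    print(kb.retrieve("testing"))
```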

Real-World Use Cases for Developers

"Autonomously, an Antigravity Agent writes code for a new frontend feature, uses the terminal to launch localhost, and actuates the browser to test that the new feature works." — Google Antigravity Team, Official Google Product Team, Google.

The practical applications of Google Antigravity reveal its potential for addressing common development challenges. These use cases demonstrate how this AI-powered platform handles real-world scenarios that developers encounter daily.

Build a full-stack web app with one prompt

Complete application generation becomes possible through detailed prompts. A developer might specify requirements like "1-day technical conference site with 8 talks, speaker profiles, and search functionality using Python and Flask". Antigravity handles the entire development cycle—from initial planning through implementation and validation—creating all necessary files, installing dependencies, and launching the server for immediate review.
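
As a rough idea of what the search functionality in such a prompt involves, here is a sketch of the data model and a case-insensitive search in plain Python. The `Talk` class, the `search_talks` function, and the sample talks are invented for illustration and are not output from Antigravity. In a Flask app, a route handler would call a function like this with the query string.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Talk:
    title: str
    speaker: str
    abstract: str


def search_talks(talks: list[Talk], query: str) -> list[Talk]:
    """Return talks whose title, speaker, or abstract contains the query."""
    needle = query.strip().lower()
    if not needle:
        return list(talks)  # an empty query matches everything
    return [
        talk for talk in talks
        if needle in talk.title.lower()
        or needle in talk.speaker.lower()
        or needle in talk.abstract.lower()
    ]


if __name__ == "__main__":
    # Hypothetical sample data.
    talks = [
        Talk("Agents in the IDE", "Sam Lee", "How agent-first tooling works."),
        Talk("Testing at Scale", "Ana Ruiz", "Mocking external services."),
    ]
    for talk in search_talks(talks, "agent"):
        print(talk.title, "by", talk.speaker)
```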

Generate and test unit tests automatically

Testing workflows benefit significantly from Antigravity's code analysis capabilities. The platform examines existing code, generates appropriate unit tests with mock implementations, and validates their functionality. This proves particularly valuable when dealing with external services requiring complex mocking, as the agent creates necessary test stubs and executes verification automatically.
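
For readers unfamiliar with the pattern, the following sketch shows what a unit test with a mocked external service looks like, using Python's standard `unittest` and `unittest.mock`. The function under test (`get_username`) and the client's `fetch_user` method are hypothetical. This example is hand-written for illustration, not something Antigravity generated.

```python
import unittest
from unittest.mock import Mock


def get_username(client, user_id: int) -> str:
    """Hypothetical function under test: look a user up via an external service."""
    response = client.fetch_user(user_id)
    return response["name"].title()


class GetUsernameTest(unittest.TestCase):
    def test_formats_name_from_service(self):
        # The Mock replaces the real client, so no network call is made.
        client = Mock()
        client.fetch_user.return_value = {"name": "ada lovelace"}

        self.assertEqual(get_username(client, 7), "Ada Lovelace")
        client.fetch_user.assert_called_once_with(7)


def run_tests() -> bool:
    """Run the test case and report whether every test passed."""
    suite = unittest.defaultTestLoader.loadTestsFromTestCase(GetUsernameTest)
    result = unittest.TextTestRunner(verbosity=0).run(suite)
    return result.wasSuccessful()


if __name__ == "__main__":
    print("All tests passed:", run_tests())
```

The mock both supplies a canned response and records how it was called, which lets the test check behavior without reaching the real service.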

Debugging with browser walkthroughs

The platform's browser integration enables direct interaction with applications during debugging. Agents can execute code within Chrome, test functionality, capture screenshots or screen recordings, and identify issues. The system allows developers to add Google Docs-style comments on these artifacts to guide future improvements.

Creating dashboards and productivity tools

Dashboard development represents another practical application where Antigravity excels. Developers can create sophisticated finance risk visualization tools with interactive controls across multiple pages, complete with sliders for time horizons and modern fintech UI components—all generated from a single detailed prompt.

Conclusion

Google Antigravity marks a notable advance in software development tooling. Throughout this analysis, we've examined how the platform shifts coding from manual processes toward agent-orchestrated workflows where AI handles complex implementation tasks with reduced human intervention.

The progression from traditional coding assistants to autonomous agents represents a meaningful change in development methodology. Because several agents can work in parallel across different workspaces, Antigravity may increase developer throughput compared to AI tools that handle one request at a time.

What distinguishes Antigravity is its architectural approach to the development environment. The platform's dual-view design enables switching between direct coding and task delegation, addressing different workflow requirements within a single system.

The browser integration capabilities stand out as particularly valuable for end-to-end testing scenarios. Agents can write code, launch applications, and verify functionality while generating detailed artifacts that document their work—a significant improvement over traditional debugging approaches that require manual verification at each step.

Google Antigravity indicates a direction where AI moves beyond code completion to become a participant in planning, execution, and validation of engineering tasks. For building full-stack applications, generating test suites, debugging through browser interactions, or creating dashboard interfaces, this tool enables developers to operate at a more strategic level.

The public preview provides an opportunity to evaluate this agent-first approach firsthand. While the platform continues to develop, Antigravity already demonstrates how AI can alter the relationship between developers and code, allowing focus on architecture and problem-solving while agents manage implementation details.
